A little-known ancient alphabet can teach us a lot about Large Language Models (LLMs) and about ourselves.

The alphabet we use today is a modified Latin (or Roman) alphabet.

A thousand years ago, English (or Old English) was written in a different alphabet, now known as the “Futhorc.”

Each of the Futhorc’s letters had a meaning attributed to it. (The letter, or runstæf, ‘F’ meant “wealth,” for example.)

Ancient Vectors

In 2006, my paper “The Old English Rune Poem: Semantics, Structure, and Symmetry” was published in The Journal of Indo-European Studies. Written during the 8th or 9th century CE, the poem illustrates the meaning of each of the Futhorc’s letters.

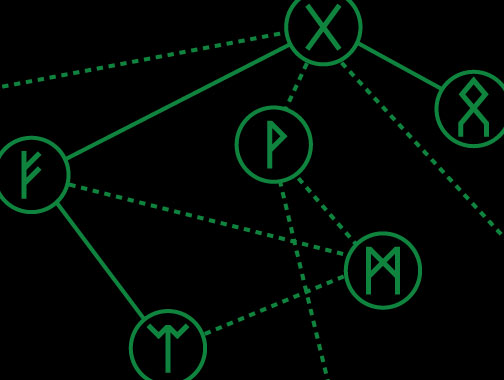

In my paper, I demonstrated that the poem has a chiastic, or symmetrical, structure, with the first stanza (and, thus, letter) relating to the last stanza (and letter), the second stanza (and letter) relating to the penultimate stanza (and letter), and so on.
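The pairing described above can be sketched in a few lines of Python. This is only an illustration: the function name `chiastic_pairs` is hypothetical, and the count of 29 stanzas is an assumption (it is the figure usually given for the poem, but it is not stated above).

```python
def chiastic_pairs(stanza_count):
    """Pair stanza i with stanza (stanza_count + 1 - i), counting from 1."""
    return [(i, stanza_count + 1 - i) for i in range(1, stanza_count // 2 + 1)]


# Assumption: the poem has 29 stanzas, one per rune.
STANZA_COUNT = 29
pairs = chiastic_pairs(STANZA_COUNT)

# With an odd number of stanzas, the middle one has no partner.
middle = (STANZA_COUNT + 1) // 2 if STANZA_COUNT % 2 else None

for first, last in pairs[:3]:
    print(f"stanza {first} <-> stanza {last}")
print(f"unpaired middle stanza: {middle}")
```

Under that assumption, the first stanza pairs with the twenty-ninth, the second with the twenty-eighth, and the fifteenth stands alone at the center.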

We can discover other relationships between the stanzas. The first (‘F’), for example, speaks not only of wealth but of distributing or giving money away, charitably, while the seventh rune speaks of a charitable “gift” of money or support.

In other words, the poem isn’t meant to be read one stanza following the other (or, if you do read it like that, you won’t grasp the deeper meaning).

LLM Vectors

LLMs convert words (or, more precisely, word fragments called “tokens”) into lists of numbers called “vectors.” The AI equivalent of map coordinates, these vectors plot words in a multidimensional space.

Words that are frequently used together, or in similar contexts, are plotted, as vectors, close to each other. Words that are rarely used together are plotted further apart.

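However, the connection is more than one of frequency. Loosely speaking, each dimension can be thought of as capturing a quality: Is it biological? A substance? A home? And so on. (In practice, the dimensions are learned by the model and rarely map neatly onto a single, human-readable quality.)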

The LLM calculates the space between the words (or points) through context, similarity, and dissimilarity. Let’s look at the word “bee.”

- Bee and Hive: These words will be plotted close together because they share a context. (Bees make, and live in, beehives, or “hives,” and, hence, these words are often used together.) The LLM can calculate that when “bee” appears, “hive” is a high-probability neighbor.

- Honey and Pollen: The LLM can calculate a short distance between them because both belong to the same context. (Bees collect pollen and nectar, and make honey from the nectar.)

- Bee and Refrigerator: The distance between these two words would be massive. They do not share a context, and do not have any similarities (e.g., a bee is biological and a fridge is not).

So, while an LLM would be likely to predict that “bee” and “hive” would appear together, it would be unlikely to predict “bee” and “refrigerator” would.
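To make the idea of “distance” concrete, here is a minimal Python sketch. The four dimensions and all of the numbers are invented for illustration; real LLM vectors are learned from text, not written by hand, and typically have hundreds or thousands of dimensions. The closeness measure used here, cosine similarity, is one common choice and is an assumption on my part.

```python
import math

# Toy vectors. Hypothetical dimensions: (biological, dwelling, food, appliance).
# Every number below is made up for the sake of the example.
TOY_VECTORS = {
    "bee":          [0.9, 0.3, 0.4, 0.0],
    "hive":         [0.6, 0.9, 0.3, 0.0],
    "honey":        [0.5, 0.2, 0.9, 0.0],
    "pollen":       [0.7, 0.1, 0.7, 0.0],
    "refrigerator": [0.0, 0.1, 0.4, 0.9],
}


def cosine_similarity(a, b):
    """Return a score near 1.0 for vectors pointing the same way, near 0.0 for unrelated ones."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def similarity(word_a, word_b):
    """Look up two words in the toy table and compare their vectors."""
    return cosine_similarity(TOY_VECTORS[word_a], TOY_VECTORS[word_b])


for pair in [("bee", "hive"), ("honey", "pollen"), ("bee", "refrigerator")]:
    print(pair, round(similarity(*pair), 2))
```

With these toy numbers, “bee” scores far higher against “hive” than against “refrigerator,” which is the pattern described in the list above.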

With all words plotted as numerical vectors, LLMs are able to predict, one word at a time, the most probable word that should follow in any sequence (i.e., a sentence or an answer to a query).
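The prediction step can be sketched as follows. This is a simplified illustration, not how any particular model is implemented: the candidate words and their raw scores are hypothetical, and the “softmax” function shown is the standard way of turning such scores into probabilities that add up to 1.

```python
import math


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    highest = max(scores.values())
    # Subtracting the highest score keeps the exponentials numerically stable.
    exps = {word: math.exp(score - highest) for word, score in scores.items()}
    total = sum(exps.values())
    return {word: value / total for word, value in exps.items()}


# Hypothetical raw scores for the word following "The bee flew back to the".
TOY_SCORES = {"hive": 4.0, "flower": 3.0, "garden": 2.0, "refrigerator": -2.0}

probabilities = softmax(TOY_SCORES)
most_probable = max(probabilities, key=probabilities.get)

for word, p in sorted(probabilities.items(), key=lambda item: -item[1]):
    print(f"{word}: {p:.3f}")
print("most probable next word:", most_probable)
```

The chosen word is then added to the sequence, and the same step is repeated for the word after it.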

As such, LLMs are closer to ancient methods of divination than to the kind of conditional (“if this, then that”) logic that we tend to associate with computer programs.

Divination by LLM

Deriving from the Latin word divinationem (meaning “prediction”), divination is the attempt to get information by non-logical means, often linked to religious or spiritual belief. One old and simple method of divination was bibliomancy: A person would ask a question, open a Bible at random, put their finger on a passage, and regard whatever it said as the answer (or, at least, as a clue to the answer) to their problem.

More famous methods of divination are the Chinese I-Ching and the Tarot (the latter of which has seeped increasingly into Western consciousness in recent years, appearing even in a Dior fashion collection).

Such ancient methods aren’t as simple as they look. Notably, the I-Ching can produce over 4,000 possible answers (64 hexagrams, each of which can change into any of the 64, gives 64 × 64 = 4,096).

Runes, likewise, are associated with divination. It is difficult to say how, historically, they were used in this regard (or if they were used for this purpose at all; many scholars would deny that they were in antiquity).

Let’s leave history aside. Today, sets of runes intended for divination (usually carved into small wooden blocks) can be easily purchased.

Let’s just imagine that we have such a set. We have asked a question and, without looking, have picked out a rune.

We can contemplate its meaning (as per the Old English Rune Poem). We can think about the meaning of its opposite rune (because of the chiastic structure of the OERP). And we can think about other stanzas that talk about related things, even though those stanzas might not follow on logically. In a sense, in doing so, we are thinking about the connection of words and meanings in structural arrangements.

This, in effect, is what LLMs are doing.

If the question is complex enough, an LLM will give you a different answer every time you ask it. Probability, randomness, or chance is as present in the most modern AI technology as it is in ancient, “primitive,” divination.
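The role of chance can be illustrated with a short Python sketch. Rather than always taking the single most probable word, the code draws a word at random, weighted by its score. The scores are hypothetical, and the “temperature” setting is an assumption about how a given system is configured: a low temperature makes the draw nearly deterministic, while a higher one lets less likely words through.

```python
import math
import random

# Hypothetical raw scores for the word following "The bee flew back to the".
TOY_SCORES = {"hive": 4.0, "flower": 3.0, "garden": 2.0, "refrigerator": -2.0}


def sample_next_word(scores, temperature=1.0, rng=random):
    """Draw one word at random, weighted by its temperature-scaled score."""
    words = list(scores)
    scaled = [scores[word] / temperature for word in words]
    highest = max(scaled)
    weights = [math.exp(value - highest) for value in scaled]
    return rng.choices(words, weights=weights, k=1)[0]


rng = random.Random(0)  # fixed seed so the sketch is repeatable
warm_draws = [sample_next_word(TOY_SCORES, temperature=1.0, rng=rng) for _ in range(200)]
cold_draws = [sample_next_word(TOY_SCORES, temperature=0.01, rng=rng) for _ in range(50)]

print("distinct words at temperature 1.0:", sorted(set(warm_draws)))
print("distinct words at temperature 0.01:", sorted(set(cold_draws)))
```

At the higher temperature, repeated draws produce a mix of words; at the very low one, the draw collapses onto the top-scoring word.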

Runes, LLMs, and Inner Knowledge

Some people read only at a surface level. They take things literally and don’t think about how things connect.

Other people find deeper connections.

This is the job of the creative thinker, whether that is a designer, artist, philosopher, or CEO.

(As far as I know, I am the first person to claim that the Old English Rune Poem has to be read symmetrically. Since the meanings of one or two runes have had to be guessed, a symmetrical reading changes how those runes are interpreted.)

In spirituality, this deeper type of thinking is often called “esoteric,” meaning “inner” (from Greek esoterikos, meaning “belonging to an inner circle,” from esotero, “more within,” according to Etymonline).

This doesn’t mean that LLMs are thinking esoterically. LLMs use vectors to calculate the most probable words in a sequence.

In contrast, the Old English Rune Poem seems unconcerned with probability (unless we believe that the poem was somehow intended to be used for divination) and focused instead on connections that illuminate meanings deeper than the average person could detect.

But, as we noted, like the I-Ching, Tarot, or other forms of divination, LLMs provide answers not necessarily grounded in facts but based on “probability.” That probability is based on patterns in the use of words.

Probability

Etymologically, the term “probability” is related to (1) “to probe” and (2) “probable.”

What is likely and what can be probed: Two ideas that seem, initially, to be only loosely related. Yet, they are closely connected in the ancient practice of divination—and, now, in the processes of LLMs.

If modern technology corporations are digitizing human consciousness and creating a simulacrum of it, LLMs are converging on the probable and the average.

In contrast, human geniuses have used what we might call “ancient vectors” (or divination) to unearth the unlikely, the improbable, and previously unconsidered insights and strategies. They used them to “think different” (to quote Apple) and go deeper.

Ordinary vs. Extraordinary “HITL”

As with ancient divination systems, whether we get genius or the average from any LLM will depend on the “human in the loop” (HITL).

AI will make Character and Craft even more important than they were before its emergence.

The average person will produce average results. They will produce “slop.”

But those who can go deeper, who think differently, who understand the human psyche, and who treat AI as a Craft to be explored, perfected, and refined, will create the extraordinary.
